LATASHA

ART DIRECTOR/CREATIVE DIRECTOR/ARTIST

CASE STUDY

What It Was

A live, real-time concert experience featuring the performer Latasha, built as a platform validation showcase for next-generation performer systems. The show functioned as a full-stack testbed for new avatar aesthetic design and technologies, real-time capture pipelines, modular content systems, and live performer-audience interaction, deployed simultaneously across PC and VR.

Why It Mattered: Modular Thinking, Lower Friction, Faster Production, Aesthetic Identity

The project served as a foundational platform milestone, validating core systems that would later become part of the scalable performer and platform architecture. It established early proof for modular construction workflows, cross-platform parity, real-time interaction models, and next-generation avatar pipelines, forming the aesthetic and technical groundwork for future platform development rather than a one-off show build.

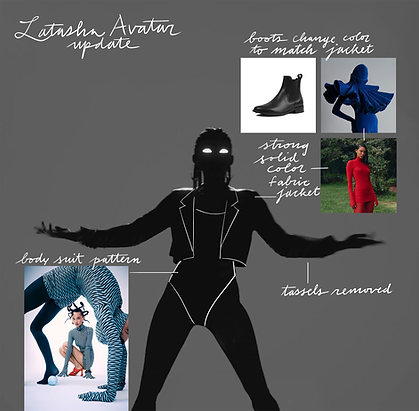

For example, this involved developing performer avatars that could be customized quickly, within two days. The previous method of avatar development relied on custom modeling, texturing, and skinning, focused on a hyper-particular likeness.

Both Meta and the performing artists were very satisfied with this format.

As a result of this development, performer avatar production went from hundreds of hours across several teams to a single quick piece of concept art and one technical artist to implement that direction. Because the avatars shared a consistent base, the motion capture testing burden dropped from weeks to being handled entirely within the performer's rehearsal process.

Why It Mattered - More Modular Thinking

This modular framework was also applied to environment creation. In previous shows, environments had largely been one-off creations, with assets and designs sourced from scratch by large teams of outsourced artists. As with the performer avatar, the goal for this show was to develop a method of rapid show production while maintaining or improving on the positive feedback earned by the previous expensive, time-consuming production processes.

We quickly built a library of more abstract 3D forms that could be reconfigured into a wide array of stage designs, along with a large set of tiling textures that could be color-remapped and combined. This set of mesh and material primitives provided a vocabulary wide enough to span genres and artist identities, while giving Wave a defined aesthetic that could be driven by audio-reactive systems and input from the performer's mocap data.

EXAMPLES OF TESTING AUDIO DRIVEN MATERIAL ANIMATION AND MESH DEFORMATION:

RESULTS

The methods employed for this product became the new framework for subsequent shows, securing a new round of funding from Meta.

These methods reduced the size of the build, strengthened the musical connection, massively decreased production time, and gave Wave experiences a visually consistent identity. Production went from three to six months per show to several shows a week, while user satisfaction increased.

SOME ADDITIONAL ARTIFACTS: